Last weekend I finally took some of my personal photos and artwork and “painted” them in the style of Van Gogh, among others. The “painting” part is actually an inspiring application of deep learning research [1,2]. In short, the deep learning model (a deep neural network) takes your photo (referred to as the content-image henceforth) and a reference style (a combination of one or more style-images) as inputs and produces your photo in that style. A popular implementation of [1] is publicly available [3].

Demo

Here the content-image is a photo of a walking path (top left), the style-image is an artwork by the artist Leonid Afremov (top right), and the result is the walking path rendered in Afremov's style (bottom).

The machine did not "paint" the picture out of the blue; it is an iterative learning process. In short, it starts with a blank (noisy) canvas and tries to learn the prominent features of both the content-image and the style-image(s) and to present them in the final product. That learning process is expressed mathematically as the optimization of a function representing the combined dissimilarity (loss) between the output and the inputs. Optimizing (i.e. minimizing) such a function requires an algorithm that goes through a series of iterations. These iterations are arguably the process of (machine) perception. The following gif is a visualization of that process.
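To make the iterative process above a bit more concrete, here is a minimal toy sketch in pure Python. It is not the implementation in [3]: the real method computes features with a convolutional network, whereas this sketch assumes the raw values of a tiny 3x8 "image" are the features. The function names, the weights `w_content` and `w_style`, the step size, and the toy data are all made up for illustration. What it keeps from [1] is the general shape of the idea: a content term comparing the canvas to the content-image, a style term comparing Gram matrices, and repeated small updates that start from a noisy canvas.

```python
import random

def gram(F):
    """Gram matrix G[i][j] = sum_k F[i][k] * F[j][k] of a C x N feature map."""
    return [[sum(a * b for a, b in zip(fi, fj)) for fj in F] for fi in F]

def total_loss(X, content, style_gram, w_content, w_style):
    """Weighted sum of a content term and a Gram-matrix style term."""
    C, N = len(X), len(X[0])
    content_loss = 0.5 * sum((x - p) ** 2
                             for xr, pr in zip(X, content)
                             for x, p in zip(xr, pr))
    G = gram(X)
    style_loss = sum((G[i][j] - style_gram[i][j]) ** 2
                     for i in range(C) for j in range(C)) / (4 * C**2 * N**2)
    return w_content * content_loss + w_style * style_loss

def gradient(X, content, style_gram, w_content, w_style):
    """Analytic gradient of total_loss with respect to every canvas value."""
    C, N = len(X), len(X[0])
    G = gram(X)
    grad = []
    for a in range(C):
        row = []
        for b in range(N):
            d_content = X[a][b] - content[a][b]
            d_style = sum((G[a][j] - style_gram[a][j]) * X[j][b]
                          for j in range(C)) / (C**2 * N**2)
            row.append(w_content * d_content + w_style * d_style)
        grad.append(row)
    return grad

def stylize(content, style, w_content=1.0, w_style=10.0,
            step=0.1, iterations=200, seed=0):
    """Start from a noisy canvas and take plain gradient descent steps."""
    rng = random.Random(seed)
    C, N = len(content), len(content[0])
    style_gram = gram(style)
    X = [[rng.random() for _ in range(N)] for _ in range(C)]  # noisy canvas
    losses = []
    for _ in range(iterations):
        losses.append(total_loss(X, content, style_gram, w_content, w_style))
        g = gradient(X, content, style_gram, w_content, w_style)
        X = [[x - step * d for x, d in zip(xr, gr)] for xr, gr in zip(X, g)]
    losses.append(total_loss(X, content, style_gram, w_content, w_style))
    return X, losses

# Toy inputs (assumed data): 3 "channels" with 8 positions each.
content = [[0.1 * k for k in range(8)], [0.5] * 8, [1 - 0.1 * k for k in range(8)]]
style = [[float(k % 2) for k in range(8)], [0.2] * 8, [float((k + 1) % 2) for k in range(8)]]

image, losses = stylize(content, style)
print("loss at start:", losses[0])
print("loss at end:  ", losses[-1])
```

Plain gradient descent is used here only for brevity. Raising `w_style` relative to `w_content` pulls the canvas toward the style statistics at the expense of fidelity to the content.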

More images

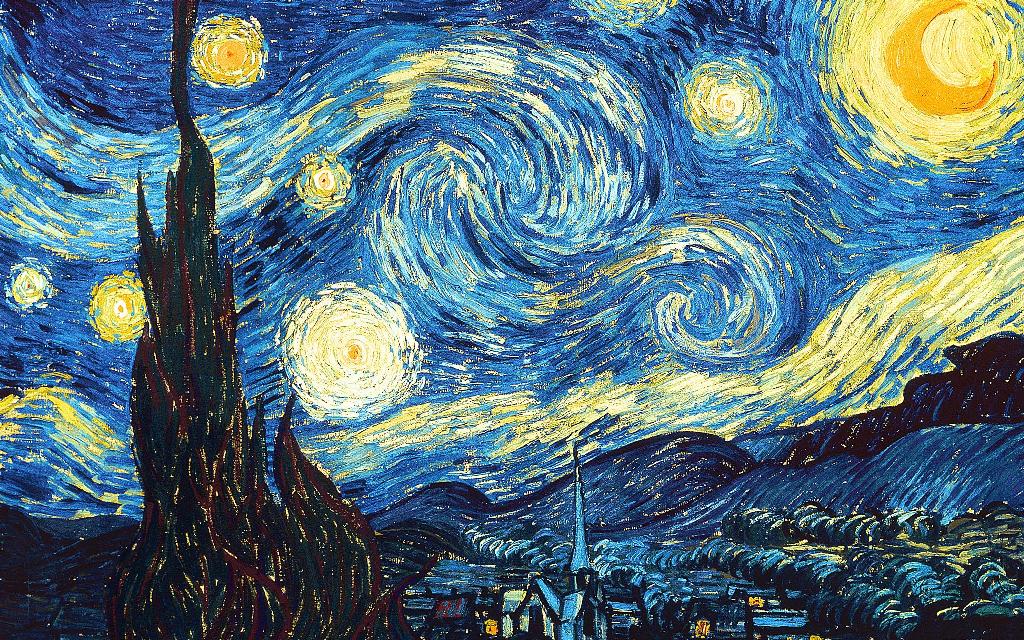

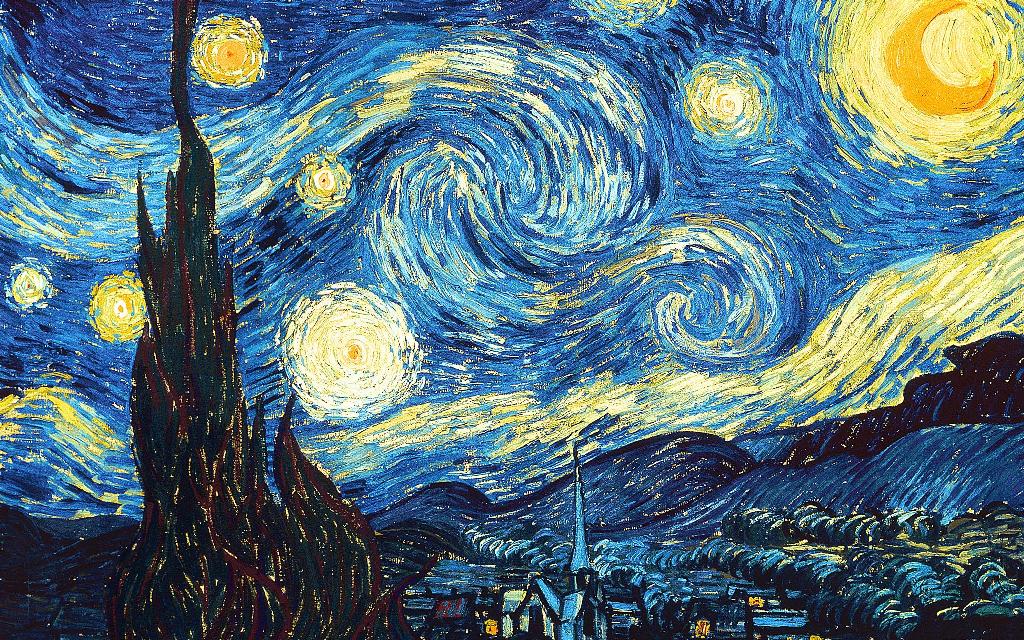

Near the quay + Van Gogh's Starry Night

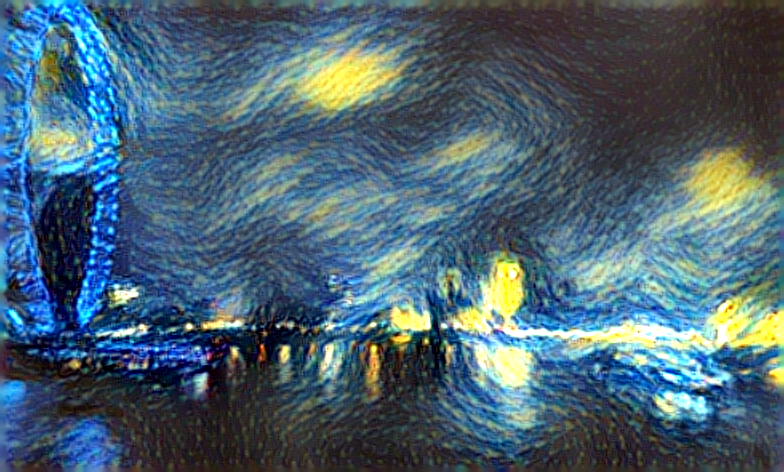

London + Van Gogh's Starry Night

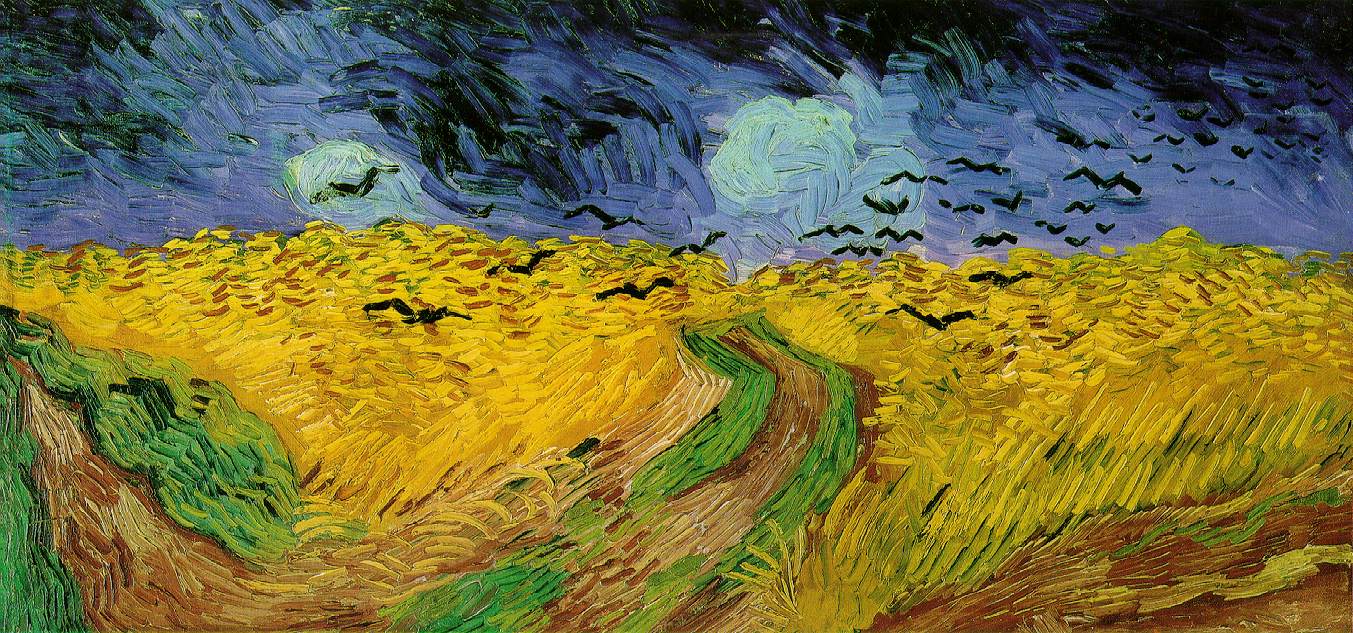

Field + Van Gogh's Wheat Field with Crows

Painting study + creativemints.at.behance's

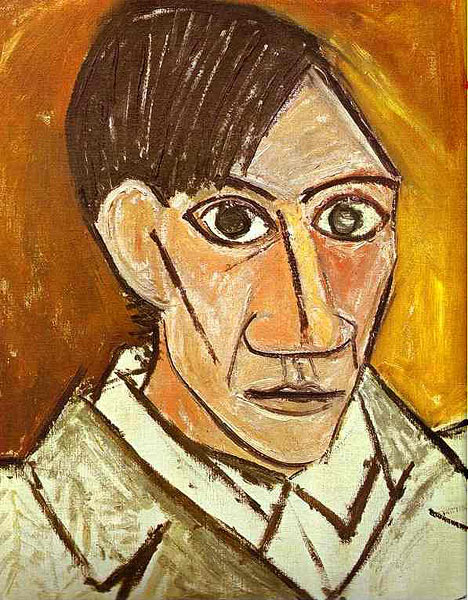

Painting study + Picasso's Self-portrait

Observation

- Size matters. The higher the output image resolution, the more detail of the content-image can be explicitly expressed in the chosen style. However, memory is a big trade-off. The default output image size (512px) on NIN took as little as 500-600MB, but a 784px image devours 3 times as much!

- For visual appeal, the key is (i) an expressive style and/or (ii) a resemblance between the content-image and the style-image. “Expressive” here is not specifically about being abstract or surreal, but about texture in general, i.e. discernible patterns in colors, strokes, and blobs. Examples: Leonid Afremov’s paintings, The Scream, Monet’s water lilies, … Conversely, style-images taken from intricate realist pieces by the old masters, such as Jean-Léon Gérôme, usually yield unsatisfying output with the default parameters. Secondly, although it’s not always the case, a landscape picture pairs pretty well with Starry Night or Wheat Field with Crows, and a portrait and Picasso’s self-portrait also make a nice combination.

- I lust over a Titan X.
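
The memory observation above can be sanity-checked with a little arithmetic. The sketch below assumes, as a simplification, that memory use grows in proportion to the number of pixels and that the aspect ratio stays fixed; the helper names `pixel_ratio` and `estimate_memory_mb` are made up for this example.

```python
def pixel_ratio(old_width, new_width):
    """Growth factor of the pixel count when the width changes at a fixed aspect ratio."""
    return (new_width / old_width) ** 2

def estimate_memory_mb(base_mb, old_width, new_width):
    """Naive estimate: scale a measured memory figure by the pixel-count ratio."""
    return base_mb * pixel_ratio(old_width, new_width)

ratio = pixel_ratio(512, 784)
low = estimate_memory_mb(500, 512, 784)
high = estimate_memory_mb(600, 512, 784)
print(f"pixel ratio: {ratio:.2f}")
print(f"naive estimate at 784px: {low:.0f}-{high:.0f} MB")
```

Going from 512px to 784px multiplies the pixel count by about 2.3, so pixel count alone accounts for most, but not all, of the roughly threefold increase I measured; the remainder presumably comes from overhead this simple model ignores.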

Hardware setup

On the technical side, the main workhorse is a humble NVIDIA GTX 660 2GB in a dated LGA 775 desktop. A GPU with generous memory is desirable. I also used NIN instead of the VGG net because of the limited VRAM. Each image was generated in ~90 seconds (max. 512px wide) or 2-3 minutes (max. 784px wide).

It's much faster to run on a GPU than on a CPU. Even a modern i7 K-series can take up to an hour to train and generate one image of the above sizes. However, running on a CPU can take advantage of abundant host memory (easily >8GB on a single machine), not to mention the possibility of pairing it with a coprocessor (e.g. Xeon Phi) or running on a distributed platform.

References

[1] A Neural Algorithm of Artistic Style, http://arxiv.org/abs/1508.06576

[2] Artomatix (NB: this start-up has elevated a similar application as a side project in 2014, one year before paper [1] was published)

[3] https://github.com/jcjohnson/neural-style

[4] Artist credit where it's due
